Please fill out the fields below so we can help you better. Note: you must provide your domain name to get help. Domain names for issued certificates are all made public in Certificate Transparency logs (e.g. https://crt.sh/?q=example.com), so withholding your domain name here does not increase secrecy, but only makes it harder for us to provide help.

My domain is: numerous, including e.g. annemiller.uk (renewal attempted at 2019-10-03 06:21 BST)

I ran this command: “dehydrated -c”

It produced this output: “ERROR: Problem connecting to server (post for https://acme-v02.api.letsencrypt.org/acme/chall-v3/610799063/GogFSA; curl returned with 35)”

My web server is (include version): various, mostly Apache 2.4 and Apache 2.2

The operating system my web server runs on is (include version): various, including Debian Stretch, Raspbian Stretch, SLES and CentOS

My hosting provider, if applicable, is: various

I can login to a root shell on my machine (yes or no, or I don’t know): yes

I’m using a control panel to manage my site (no, or provide the name and version of the control panel): no

The version of my client is (e.g. output of certbot --version or certbot-auto --version if you’re using Certbot): a recently updated copy of https://github.com/lukas2511/dehydrated (I’ve been using this on lots of different servers since Let’s Encrypt started)

About a week ago I started getting errors from the client because the case of the headers coming from LE had changed, so a grep had to become “grep -i” to match them case-insensitively. This had already been fixed in the client, so I updated everything. However, it looks to me as though the underlying cause is a major change at the LE end, perhaps a switch to HTTP/2, with the change in header case being just a minor symptom of that?

Since then I have sometimes been getting curl error 35 (according to https://curl.haxx.se/libcurl/c/libcurl-errors.html error 35 is: “CURLE_SSL_CONNECT_ERROR (35) A problem occurred somewhere in the SSL/TLS handshake. You really want the error buffer and read the message there as it pinpoints the problem slightly more. Could be certificates (file formats, paths, permissions), passwords, and others.”)
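
Since the libcurl documentation says the error message pinpoints the problem better than the bare code, one way to capture it is a small probe that logs curl's own error text whenever a request fails. This is only a sketch: `probe` and the `curl-errors.log` file name are hypothetical and not part of dehydrated, and the `CURL` variable is just an assumed hook for swapping in a different curl binary.

```shell
#!/bin/sh
# Hypothetical diagnostic helper, not part of dehydrated.
# Fetches a URL with curl and, on failure, appends the exit code and
# curl's own error text to a log file so the reason behind code 35 is kept.
probe() {
    url=$1
    log=${2:-curl-errors.log}
    # -s hides the progress meter, -S still prints the error message,
    # -o /dev/null discards the response body.
    err=$("${CURL:-curl}" -sS -o /dev/null "$url" 2>&1)
    rc=$?
    if [ "$rc" -ne 0 ]; then
        printf '%s rc=%s %s\n' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$rc" "$err" >> "$log"
    fi
    return "$rc"
}

# Example: probe https://acme-v02.api.letsencrypt.org/directory
```

Run from cron on the affected servers, this would show whether the handshake failures also occur outside dehydrated and what curl reports for them.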

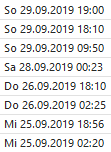

It fails randomly on several different servers. They run different web servers and operating systems, use different LE verification types (DNS and HTTP), and have IPv4 only, IPv6 only, or both. It also fails at different points in the process: mostly when there is nothing actually to renew (I assume there is an account and/or license check at the start), but sometimes part way through. If I rerun it manually when I see the error, it works. In the example above it got 5 challenge tokens successfully, checked the DNS tokens on two of them, and failed to connect on the third. It seems completely random, but I’m guessing it fails to connect on maybe 1 in 30 attempts. I’ve had at least one failure on 3 of the last 7 days. This morning I got 3 failures from 3 different servers, out of 19 server/protocol combinations in all.

Because of the diversity, this looks to me like a problem at the LE end, or possibly a general problem in curl when interacting with the new configuration of the web server at LE. I don’t think it is the dehydrated client, as that just uses curl to communicate with LE, and it fails randomly in only maybe 3% of cases, at different points in the process.
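
Since a manual rerun succeeds, a stopgap would be to rerun the client automatically when it fails. A minimal sketch, assuming a plain POSIX shell; `retry` is a hypothetical helper, not something dehydrated provides, and it retries on any non-zero exit code because the curl code 35 is not necessarily what the client itself exits with.

```shell
#!/bin/sh
# Hypothetical workaround, not part of dehydrated.
# Usage: retry MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Reruns COMMAND until it succeeds or MAX_ATTEMPTS is reached,
# then returns the exit code of the last failed attempt.
retry() {
    max=$1
    delay=$2
    shift 2
    attempt=1
    while true; do
        rc=0
        "$@" || rc=$?
        if [ "$rc" -eq 0 ]; then
            return 0
        fi
        if [ "$attempt" -ge "$max" ]; then
            echo "giving up after $attempt attempts (last exit code $rc)" >&2
            return "$rc"
        fi
        echo "attempt $attempt failed with exit code $rc; retrying in ${delay}s" >&2
        sleep "$delay"
        attempt=$((attempt + 1))
    done
}

# Example: retry 3 60 dehydrated -c
```

This only hides the symptom, and the attempt count should stay small so that a genuine failure does not hammer the ACME server.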

Any ideas?